Objective

Inference-time benchmarks of Fast R-CNN showed that a large portion of the time is spent on region proposals. For example, test time is 2.3 seconds with region proposals (computed by a fixed function such as Selective Search, which produces about 2000 region proposals) and 0.32 seconds without. SPPnet exposed the same bottleneck. So the problem with both Fast R-CNN and SPPnet is that runtime is dominated by computing region proposals.
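To make the size of that bottleneck concrete, here is a small back-of-the-envelope calculation using only the two timings quoted above. The variable names are illustrative.

```python
# Timings quoted in the text above (seconds).
total_with_proposals = 2.3   # Fast R-CNN test time including Selective Search
network_only = 0.32          # Fast R-CNN test time without region proposals

# Time attributable to region proposals, and its share of the total.
proposal_time = total_with_proposals - network_only
proposal_share = proposal_time / total_with_proposals

print(f"{proposal_time:.2f} s on proposals, {proposal_share:.0%} of test time")
```

In other words, under these numbers the proposal step accounts for the large majority of test time, which is what Faster R-CNN targets.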

Faster R-CNN solves this issue by having the CNN itself predict its own region proposals, which eliminates the overhead of computing region proposals outside the network.

Faster R-CNN was published in June 2015 (last revised in January 2016) in the paper [1506.01497] Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks.

Network Architecture

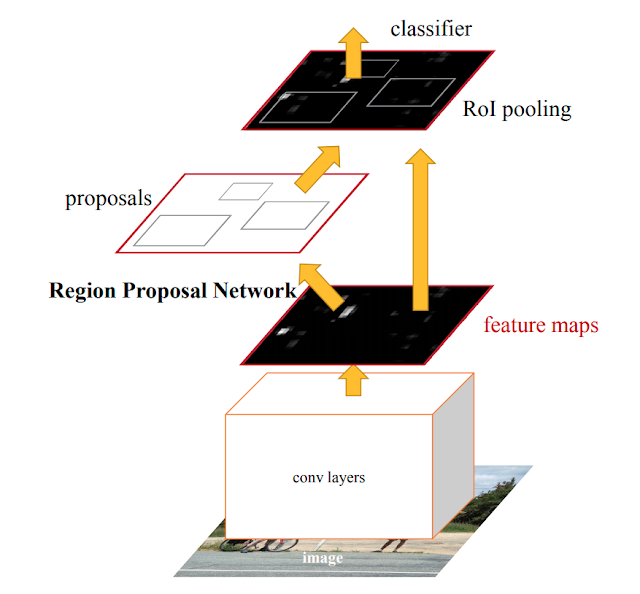

The entire input image is run through several convolutional layers to produce a convolutional feature map representing the entire high-resolution image. This is the output of the last convolutional layer and contains detections of high-level features (shapes and objects).

Faster R-CNN network. Image credit: Shaoqing Ren, Kaiming He, Ross Girshick, and Jian Sun: "Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks"

There is now a separate Region Proposal Network (RPN), a fully convolutional network that uses the convolutional feature map to simultaneously predict:

- object bounds (region proposals)

- objectness scores (is it an object or background?) at each position of that feature map

Bounding boxes are no longer calculated by a fixed algorithm but hypothesized (predicted) by the network.

RPN takes an image of arbitrary size and generates a set of rectangular object proposals. It operates on a specific conv layer, with the preceding layers shared with the object detection network. As a result, the RPN is nearly cost-free, because it shares full-image conv features with the detection network.

RPN introduces the concept of an anchor box. It slides a window across the feature map, and at each position it places k (e.g. 9) rectangles of various scales and aspect ratios, all centred on the centre of the window. These rectangles are anchor boxes. They act as candidate bounding boxes, and some of them will be promoted to actual bounding boxes at the end of the process.
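As a minimal sketch of how the k anchors at one sliding-window position could be generated: the function name `make_anchors` is hypothetical, and the three scales and three aspect ratios (giving k = 9) are assumed defaults matching those reported in the paper, not something this post specifies.

```python
import math

def make_anchors(cx, cy, scales=(128, 256, 512), ratios=(0.5, 1.0, 2.0)):
    """Return len(scales) * len(ratios) anchors centred at (cx, cy).

    Each anchor is (x1, y1, x2, y2) in image pixels. `ratio` is
    height / width, and every anchor of a given scale keeps the same
    area (scale ** 2) regardless of its aspect ratio.
    """
    anchors = []
    for scale in scales:
        for ratio in ratios:
            w = scale / math.sqrt(ratio)
            h = scale * math.sqrt(ratio)
            anchors.append((cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2))
    return anchors

# One sliding-window position, mapped back to image coordinates (300, 200).
anchors = make_anchors(cx=300, cy=200)
print(len(anchors))  # 9
```

Repeating this at every position of the feature map yields the full set of anchors that the classifier and regressor then score and refine.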

RPN has a classifier and a regressor.

For each anchor box it predicts:

- 2 objectness scores (object or background), from the classifier

- 4 coordinates, from the regressor

Region Proposal Network used in Faster R-CNN. Image credit: Shaoqing Ren, Kaiming He, Ross Girshick, and Jian Sun: "Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks"
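The per-anchor outputs listed above (2 scores and 4 coordinates) can be sketched as follows. This is an illustration under assumptions: the helper names `rpn_output_sizes` and `decode` are hypothetical, the 40 x 60 feature map is an arbitrary example size, and the box parameterisation (centre offsets scaled by anchor size, log-scale width and height) is the one described in the paper rather than in this post.

```python
import math

def rpn_output_sizes(feat_h, feat_w, k=9):
    """Count RPN outputs for a feat_h x feat_w feature map with k anchors per position."""
    n = feat_h * feat_w * k
    return {"anchors": n, "scores": 2 * n, "coords": 4 * n}

def decode(anchor, deltas):
    """Turn regressor output (tx, ty, tw, th) into a box, relative to an anchor.

    anchor and the returned box are (x1, y1, x2, y2).
    """
    ax1, ay1, ax2, ay2 = anchor
    aw, ah = ax2 - ax1, ay2 - ay1
    acx, acy = ax1 + aw / 2, ay1 + ah / 2
    tx, ty, tw, th = deltas
    cx, cy = acx + tx * aw, acy + ty * ah          # shift the centre
    w, h = aw * math.exp(tw), ah * math.exp(th)    # rescale width and height
    return (cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2)

sizes = rpn_output_sizes(40, 60)
shifted = decode((0, 0, 10, 10), (0.1, 0.0, 0.0, 0.0))
print(sizes["anchors"], shifted)
```

Zero deltas leave the anchor unchanged, so the regressor only has to learn a correction to an already reasonable box.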

We can say that:

Faster R-CNN = RPN + Fast R-CNN

Once we have the predicted region proposals, the network looks just like Fast R-CNN: we take crops of the convolutional features for those region proposals and pass them up to the rest of the network.
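Taking a crop of the convolutional features for a proposal can be sketched with a simplified RoI max pooling, the fixed-size cropping step used by Fast R-CNN. The function `roi_max_pool` below is a hypothetical single-channel, pure-Python illustration with integer region coordinates, not the actual implementation.

```python
def roi_max_pool(feature_map, roi, out_size=2):
    """Max-pool the part of a 2-D feature map inside `roi` to out_size x out_size.

    feature_map: list of rows (a single channel, for brevity).
    roi: (x1, y1, x2, y2) in feature-map cells, with x2 and y2 exclusive.
    """
    x1, y1, x2, y2 = roi
    h, w = y2 - y1, x2 - x1
    pooled = []
    for i in range(out_size):
        # Row range of the i-th bin; each bin covers at least one cell.
        r0 = y1 + (i * h) // out_size
        r1 = max(y1 + ((i + 1) * h) // out_size, r0 + 1)
        row = []
        for j in range(out_size):
            c0 = x1 + (j * w) // out_size
            c1 = max(x1 + ((j + 1) * w) // out_size, c0 + 1)
            row.append(max(feature_map[r][c]
                           for r in range(r0, r1)
                           for c in range(c0, c1)))
        pooled.append(row)
    return pooled

# A 4 x 4 feature map holding the values 0..15.
fmap = [[r * 4 + c for c in range(4)] for r in range(4)]
print(roi_max_pool(fmap, (0, 0, 4, 4)))  # [[5, 7], [13, 15]]
```

Whatever the size of the proposal, the output has the same fixed shape, which is what lets the rest of the network process every proposal in the same way.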

Loss Function

The network is now doing four things at once, so we have a four-way multi-task loss.

The region proposal network does two things:

- for each potential proposal it performs binary classification, telling whether the region contains (any) object or not => Binary Classification Loss

- it performs regression to find the bounding box coordinates for each of those proposals => Bounding box Regression Loss

The final network at the end does these two things again:

- it makes the final classification decision, producing class scores for each of these proposals => Classification Loss

- it predicts the final box coordinates, a second round of bounding box regression that corrects any errors that may have come from the region proposal stage => Bounding box Regression Loss
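The four terms above can be combined as in the following sketch. Several things here are assumptions for illustration: cross-entropy for the two classification terms, smooth L1 for the two regression terms, a plain unweighted sum (the paper normalises and weights the terms), and all the example numbers.

```python
import math

def cross_entropy(probs, target):
    """Negative log-probability assigned to the correct class."""
    return -math.log(probs[target])

def smooth_l1(pred, target):
    """Smooth L1 distance summed over box coordinates."""
    total = 0.0
    for p, t in zip(pred, target):
        d = abs(p - t)
        total += 0.5 * d * d if d < 1 else d - 0.5
    return total

# RPN: object vs background, plus box regression for one anchor.
rpn_cls = cross_entropy([0.2, 0.8], target=1)
rpn_reg = smooth_l1((0.1, 0.0, 0.2, 0.0), (0.0, 0.0, 0.0, 0.0))

# Detection head: class scores, plus a second round of box regression.
det_cls = cross_entropy([0.1, 0.7, 0.2], target=1)
det_reg = smooth_l1((0.05, 0.0, 0.0, 0.1), (0.0, 0.0, 0.0, 0.0))

# Four-way multi-task loss (unweighted sum, an assumption of this sketch).
total = rpn_cls + rpn_reg + det_cls + det_reg
print(round(total, 4))
```

Because all four terms feed one scalar, gradients from both the proposal stage and the detection stage flow back into the shared convolutional layers.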

Training

How is RPN trained?

The idea is that any region proposal whose overlap with some ground-truth object exceeds a threshold is treated as a positive region proposal, which the network should predict as a proposal, and any potential proposal that has very low overlap with every ground-truth object should be predicted as a negative.
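The overlap rule above can be sketched with intersection over union (IoU) as the overlap measure. The function names are hypothetical, and the 0.7 and 0.3 thresholds are assumed defaults taken from the paper. The sketch also ignores the paper's extra rule that marks the highest-overlap anchor for each ground-truth box as positive.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def label_anchor(anchor, gt_boxes, pos_thresh=0.7, neg_thresh=0.3):
    """Return 1 (positive), 0 (negative) or -1 (ignored during training)."""
    best = max((iou(anchor, gt) for gt in gt_boxes), default=0.0)
    if best > pos_thresh:
        return 1
    if best < neg_thresh:
        return 0
    return -1  # neither clearly object nor clearly background

anchor = (0, 0, 10, 10)
print(label_anchor(anchor, [(0, 0, 10, 10)]))    # 1
print(label_anchor(anchor, [(20, 20, 30, 30)]))  # 0
print(label_anchor(anchor, [(5, 0, 15, 10)]))    # -1
```

Anchors that fall between the two thresholds contribute nothing to the loss, so the classifier is trained only on clear-cut examples.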

Shortcomings

Faster R-CNN belongs to the region-based family of object detection methods. In this family there is some kind of region proposal step, followed by independent processing (pooling and then classification) of each potential region, sequentially.

That works well, but it is also quite slow (~7 fps), as it requires running the detection and classification portion of the model multiple times.

While accurate, these approaches have been too computationally intensive for embedded systems and, even with high-end hardware, too slow for real-time applications.

Often detection speed for these approaches is measured in seconds per frame (SPF), and even the fastest high-accuracy detector, Faster R-CNN, operates at only 7 frames per second (FPS).

Significantly increased speed comes only at the cost of significantly decreased detection accuracy.

References:

[1506.01497] Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks

Lecture 11 | Detection and Segmentation - YouTube

Object Detection for Dummies Part 3: R-CNN Family

computer science - faster-RCNN,why don't we just use only RPN for detection? - Mathematics Stack Exchange

Faster R-CNN for object detection - Towards Data Science

A Step-by-Step Introduction to the Basic Object Detection Algorithms (Part 1)

Understanding Object Detection - Towards Data Science

Region Proposal Network (RPN) — Backbone of Faster R-CNN

Faster R-CNN Explained - Hao Gao - Medium

deep learning - Anchoring Faster RCNN - Cross Validated
